Abdelhamid SAIDI

About Me

Second-year Software Engineering student at FST Settat with a strong focus on data engineering and analytics. I design and optimize databases, build scalable data pipelines, and transform raw data into actionable insights.

Through hands-on projects in data warehousing, analytics, and cloud-based architectures, I apply modern tools and best practices to develop efficient, reliable, and insight-driven data solutions. Passionate about turning complex datasets into strategic value, I’m continuously learning and refining my technical expertise in data systems.

Open to connecting, collaborating, and exchanging ideas with professionals and peers in the data and technology space.

My aim is to transform raw data into actionable insights that drive business growth and innovation.

Experience

Data Engineering Intern

Built a unified administrative database by integrating and harmonizing data from multiple government systems. Implemented Python-based deduplication and normalization to keep records clean, unique, and searchable. Optimized relational database indexing and data structures to improve interoperability and overall data consistency.

Data Science & Analytics Intern

Built and analyzed datasets by integrating data from multiple sources using Python. Implemented web scraping, data cleaning, and exploratory data analysis (EDA) to generate actionable insights. Designed visualizations and reports that communicate patterns, trends, and anomalies clearly.

Technical Skills

Programming Languages

Data Engineering & Architecture

Big Data & Analytics Platforms

Databases & Data Engineering

Orchestration, DataOps & Workflow

Data Visualization & Reporting

Certifications & Credentials

Education

Engineer's degree in Computer Engineering

Engineering studies with a focus on Computer Science and Software Development.

Physics & Engineering Sciences

Intensive preparatory program focused on mathematics, physics, and engineering fundamentals.

Featured Projects

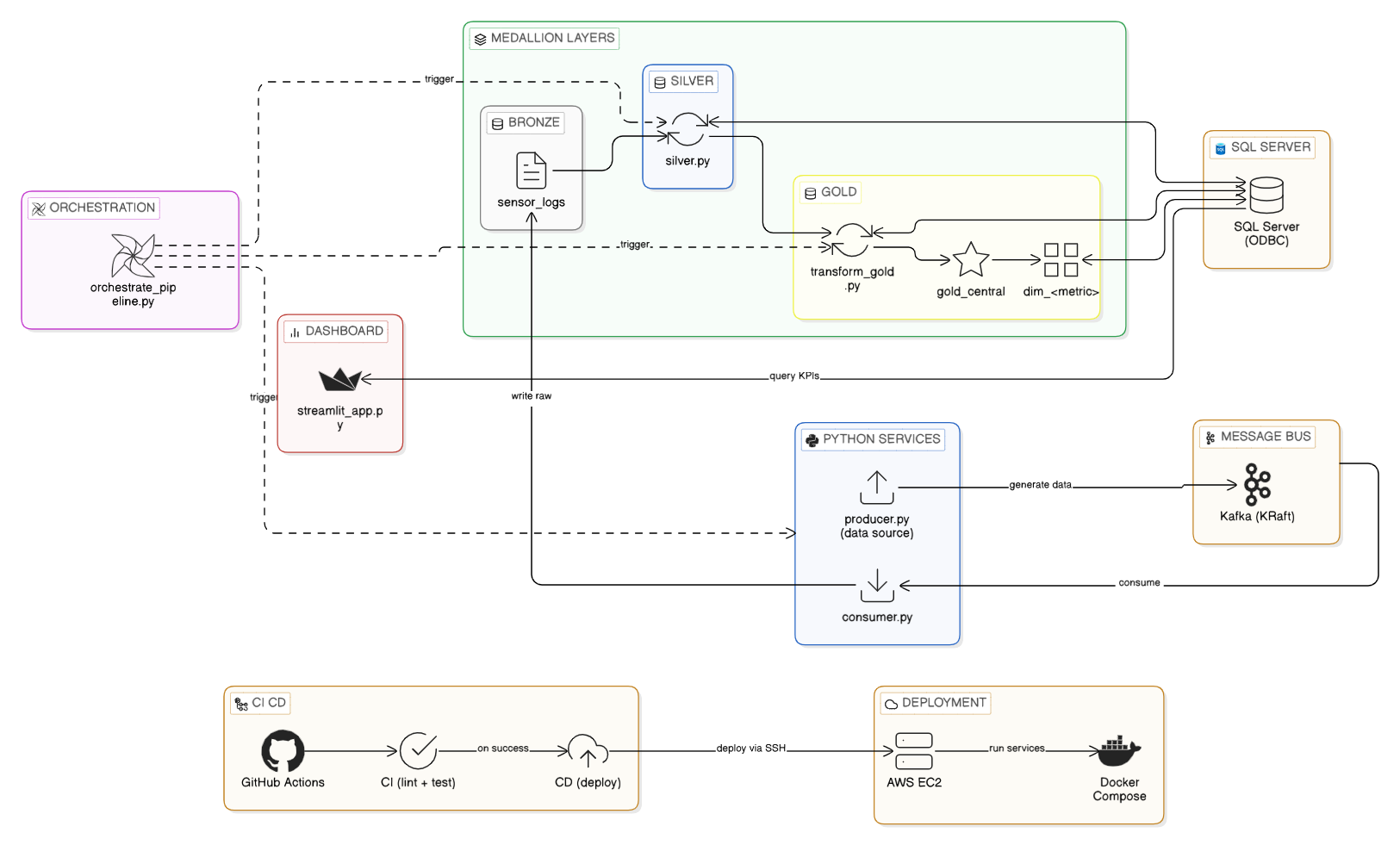

End-to-end streaming pipeline for IoT sensor data. Includes Docker/Docker Compose setup for local development.

End-to-end ELT pipeline extracting TPC-H orders into Snowflake and transforming with dbt, orchestrated by Apache Airflow.

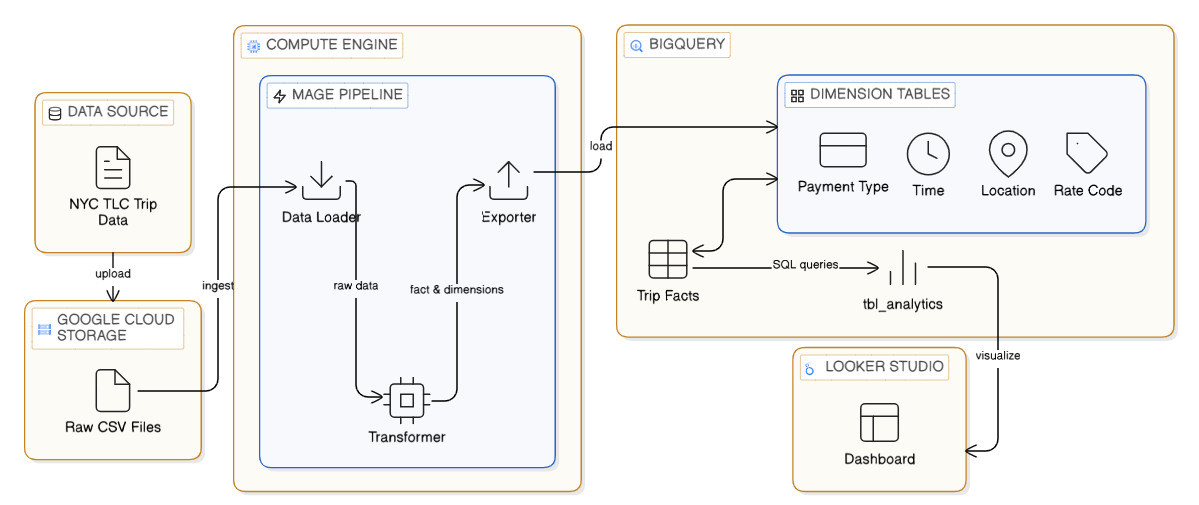

Built an end-to-end GCP pipeline with Mage, BigQuery, and Looker Studio for automated data ingestion and real-time analytics.

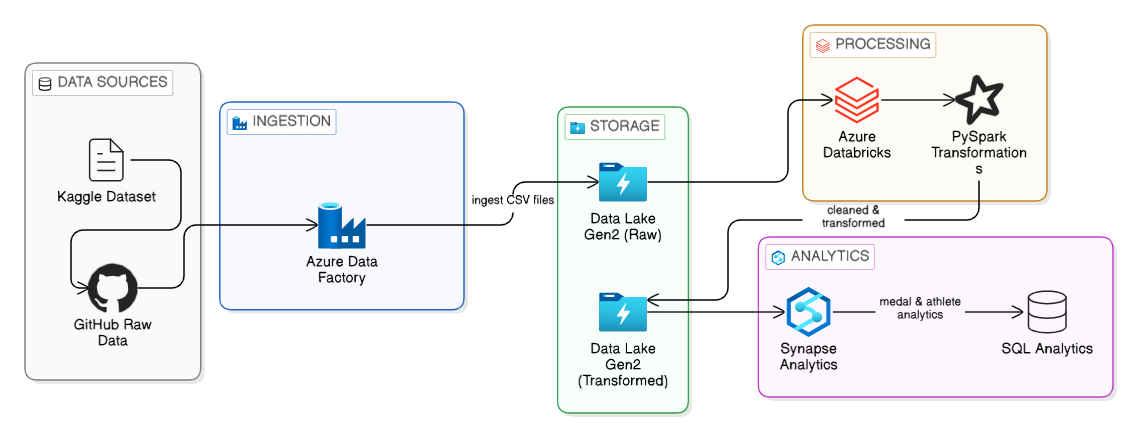

End-to-end Azure ETL and analytics pipeline processing Tokyo 2020 Olympics data using Data Factory, Databricks, and Synapse.

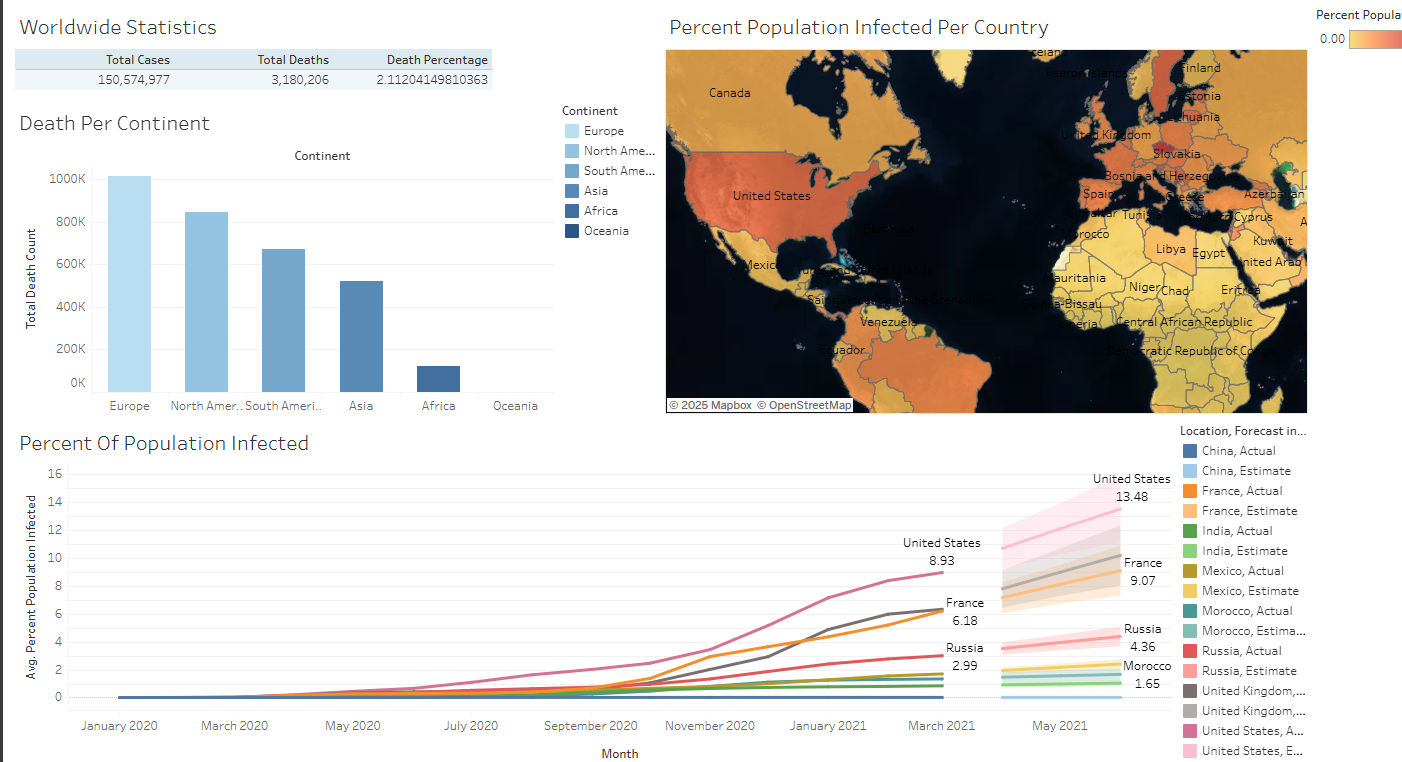

Comprehensive exploration of global COVID-19 data (2020–2021) with SQL analytics and Tableau dashboards highlighting key pandemic patterns.